ifCEM: Redefining How You Work

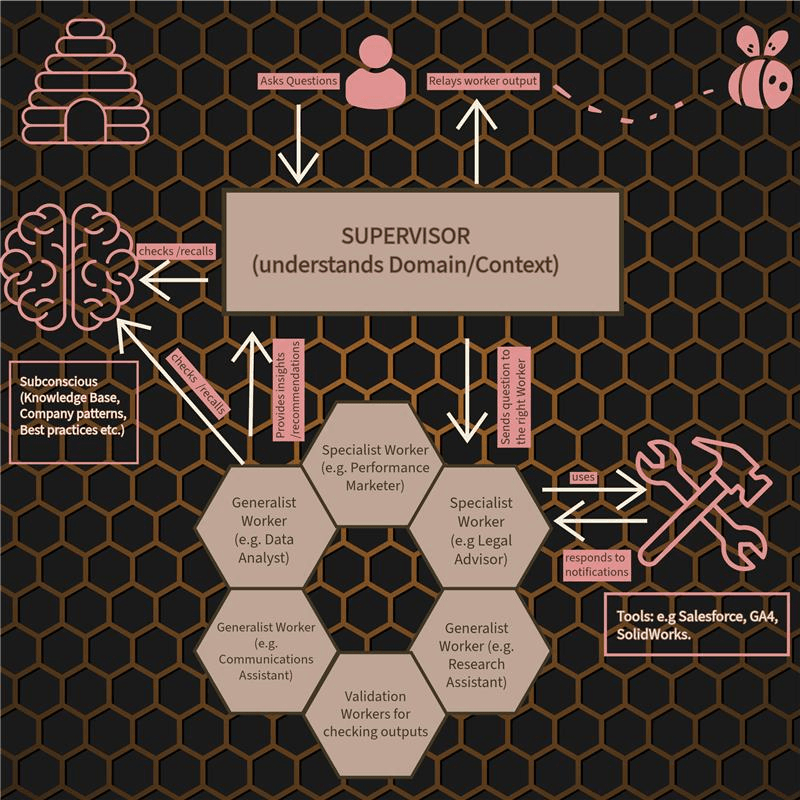

Like a beehive, ifCEM assembles and coordinates the right workers for every task. It adapts continuously and grows smarter, as a hive mind would. Each decision is validated by hive intelligence. This is the future of how work gets done.

Intelligence as a function of context, experience, and modularity: I = f(c+e+m). Rules are enforced before the system responds. Trust from design, not from bigger models or prompts.

AI looks impressive. But it fails inside real organisations.

We see it again and again: the gap between demos and real work is where most problems begin. At DataMPowered we work with organisations that find AI often fails once it moves beyond pilots. It fails not because it isn't smart, but because it doesn't know what matters here, what's allowed, or when to stop.

What matters

AI doesn't know what matters in your organisation — your goals, your data, your way of working. So it gives generic answers instead of relevant ones.

What's allowed

It doesn't know what it's allowed to do in this workspace, for this role, or under your policies. So you can't safely let it act.

When to stop

It doesn't know when it should stop, ask for clarification, or hand off. So it guesses — and in business, guessing creates risk.

The consequence

When AI lacks context, it starts guessing. Guessing leads to hallucinations. Hallucinations create risk and loss of confidence.

That's why we built ifCEM. We believe trust doesn't come from how intelligent an AI sounds — it comes from how its behaviour is governed. Rules before responses; a central layer that decides what's allowed; behaviour designed for your context, not just trained on data. So AI can move from demos into everyday operations, safely.

Trust comes from governance, not intelligence. That's the foundation everything we build sits on.

How ifCEM works

ifCEM is intelligence as a function of context, experience, and modularity. It's a way of designing AI so it behaves appropriately, not just intelligently. Like a beehive: a Supervisor orchestrates which worker bees and tools are used; each worker has a defined role and executes only what's authorised. Rules before responses.

I = f(c+e+m) — intelligence as a function of context, experience, and modularity

The Supervisor doesn't do the work alone — it routes tasks to the right workers, each with expertise and access to the right tools. Nothing runs until the right step is authorised. Behaviour is governed in real time, not observed after the fact.

Trust & governance · The hive runs on rules

- Rules before responses

- Only authorised tasks are executed

- Full audit trail

- Your data and tools stay in your control
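The Supervisor routing and the rules listed above can be pictured with a minimal sketch. This is an illustration only, not ifCEM's actual implementation or API: the `Supervisor` class, the `allowed` role-to-worker mapping, and the `audit_log` structure are all hypothetical names chosen for the example. It shows the authorisation check happening before any worker runs, and every request being recorded whether or not it was permitted.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set


@dataclass
class Supervisor:
    """Hypothetical supervisor: checks rules first, then routes to a worker."""

    # Worker name -> function that performs the task (stand-ins for worker bees).
    workers: Dict[str, Callable[[str], str]]
    # Role -> set of worker names that role is authorised to invoke.
    allowed: Dict[str, Set[str]]
    # Every request is recorded here, authorised or not.
    audit_log: List[dict] = field(default_factory=list)

    def run(self, role: str, worker: str, task: str) -> Optional[str]:
        # Rules before responses: decide whether the step is authorised
        # before any worker code is executed.
        authorised = (
            worker in self.workers
            and worker in self.allowed.get(role, set())
        )
        # Full audit trail: log the decision alongside the request.
        self.audit_log.append(
            {"role": role, "worker": worker, "task": task, "authorised": authorised}
        )
        if not authorised:
            return None  # Stop rather than guess.
        return self.workers[worker](task)


# Example usage with a single stand-in worker.
supervisor = Supervisor(
    workers={"research": lambda task: f"findings for {task}"},
    allowed={"analyst": {"research"}},
)
result_ok = supervisor.run("analyst", "research", "q3 review")
result_denied = supervisor.run("intern", "research", "q3 review")
```

In this sketch the control decision lives in one place (`run`), separate from the worker functions that do the work, which mirrors the separation of control from execution described on this page.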

Worker bees

Below are some of the worker bees the Supervisor can call on. Each operates within clear boundaries and your governance rules, so you get capability without the risk.

Quality Inspector

The platform's quality checker for all output. Validates each step before it moves on; ensures consistency, tone, and policy alignment across everything the hive produces — not just one document, but the whole pipeline.

Document Organiser

Keeps your knowledge base structured and findable; categorises and versions documents so the hive can use the right information at the right time.

Research Assistant

Gathers and verifies information against your allowed sources; compiles findings so decisions are evidence-based and traceable.

Data Analyst

Surfaces trends and outcomes using your data and approved methods; informs decisions with governed, auditable analysis.

Communications Assistant

Drafts and refines messaging within your brand and compliance guardrails; tone and audience are checked before anything goes out.

Performance Marketer

Runs campaigns and optimises metrics within defined channels and budgets; creative and audience tests stay within your governance rules.

Legal Advisor

Supports contract and compliance work; keeps regulatory alignment and documentation in one place, with changes tracked and authorised.

Scientific Researcher

Validates methods and impact using evidence; suggests improvements that fit your standards and leave a clear audit path.

Financial Analyst

Builds models and forecasts using your data and approved assumptions; budgets and recommendations are governed and traceable.

Frequently asked questions

Quick answers about ifCEM, DataMPowered, and how we think about trusted AI.

ifCEM is our AI workspace platform. It's built on the idea that intelligence is a function of context, experience, and modularity: I = f(c+e+m). Like a beehive, a Supervisor orchestrates specialist workers and tools; each worker has a defined role and only runs what's authorised. Rules are enforced before the system responds, so behaviour is governed in real time.

Most AI tools are trained to be smart; we design ifCEM to be trusted. Trust comes from design — governed behaviour, clear rules, and the right architecture — not from bigger models or clever prompts. The Supervisor-and-workers model separates control from execution, so you get capability without the risk of ungoverned automation.

Trust from design means we build trust into how the system works, not only into how it's trained. Rules before responses; only authorised tasks run; every step can be audited. Your data and tools stay in your control. So you can move from demos into real operations because behaviour is designed to be safe, not just observed after the fact.

A Supervisor understands your request and routes work to the right specialist workers, each with a defined role and access to the right tools. Nothing runs until the right step is authorised. Workers (e.g. Quality Inspector, Research Assistant, Data Analyst) execute within clear boundaries. Think of it like a beehive: the Supervisor orchestrates, as a queen bee would; worker bees do their jobs within the hive's rules.

We're in beta and opening access to early adopters. If you're interested in trying ifCEM in your organisation, request beta access via our contact form. We'll get in touch to discuss fit and next steps.

DataMPowered is the company behind ifCEM. We're building a malleable AI workspace where assistants and systems adapt in real time to show up with exactly what you need — with behaviour that's governed, auditable, and designed for trust.

Trusted intelligence is designed, not trained.

Trust comes from design — from governed behaviour, clear rules, and the right architecture — not from bigger models or clever prompts.